A continuous integration server verifies that all of the currently committed changes play well together and reduces the elapsed time between a team member committing a change and finding out it leaves the build in a poor state. The faster we find out about a defect or unstable build, the fresher the changes are in our minds and the faster we can fix it.

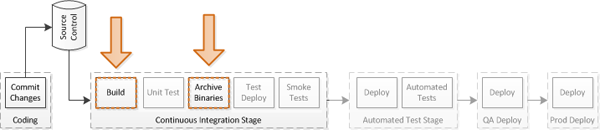

Delivery Pipeline – Focus of Current Post

This is the second post in a multi-part series on my Continuous Delivery pipeline project. The first post discussed Continuous Delivery, defined the process I am building, and outlined the technology selections I’ve made for the project. In this post I will begin setting up Continuous Integration for the project using Jenkins as a build server, MS Build to execute builds, and BitBucket to serve as the source code repository.

Server Setup

Prior to setting up the build server, I added a repository on BitBucket to serve as the central code repository, completed the MVC Music Store tutorial (full code available on Codeplex), and pushed the commits to the remote repository.

There are three major differences between my version of the database and the one on MSDN:

- My copy uses a second sdf (SQL CE) database for authentication instead of SQL Express

- I’m using the Universal Providers for ASP.Net membership (Install-Package System.Web.Providers)

- I have included the sdf files in the ASP.Net project (not something you would want to do in a production environment)

My server is a Windows 2008 R2 VM with 2GB of RAM assigned to it and a single 32GB hard drive. It was a clean, sysprepped image with no additional software installed.

Installation

To get started on the new build server VM, I’ve installed the following software:

- Chrome – because IE was annoying me

- UnxTools – Extra tools Jenkins needs that mimic several Unix commands

- [Jenkins](http://jenkins-ci.org/) – The installer will install the JRE and latest version of Jenkins

- .Net Framework 4 (check Windows updates afterwards)

- Mercurial – the Windows version will install with TortoiseHg

With a couple reboots along the way, all the packages are installed with little extra effort.

Jenkins Configuration

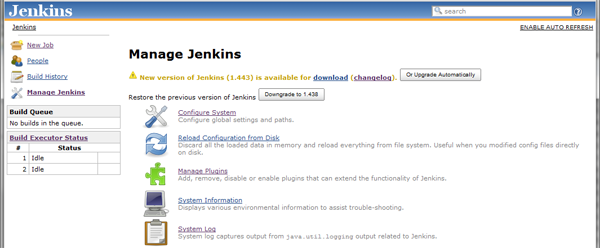

With the packages above in place, I can start up Jenkins and begin configuring it. To start Jenkins, run java -jar "C:\Program Files (x86)\Jenkins\Jenkins.war" and then point a browser to http://localhost:8080 to access the dashboard. There are also instructions to set up Jenkins as a service.

Note: Jenkins somehow magically set itself up as a service on my system (or I was really low on coffee when I was initially poking around it), so if you are following along on your own install, you may want to try accessing the dashboard prior to running the jar to see if it’s already running.
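A quick way to tell whether Jenkins is already running before launching the jar is to check whether anything is listening on the dashboard port. The following is a minimal sketch, assuming the default localhost:8080 address mentioned above; the function name port_is_open is my own and not part of Jenkins.

```python
import socket


def port_is_open(host="localhost", port=8080, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        # create_connection raises OSError if nothing accepts the connection.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused or timed out: nothing is listening there.
        return False
```

If port_is_open() returns True, something (quite possibly the Jenkins service) is already serving on that port, so try the dashboard in a browser before running java -jar. Note that this only proves a listener exists, not that the listener is Jenkins.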

The side menu offers a link to the server settings (Manage Jenkins), and from there I get a list of sub-menus in the main area that includes “Plugins”. To start with I’ll install the plugins for Mercurial, Twitter, and MS Build from the “Available” tab on the plugins screen. After installing, system-wide options for the plugins are added in the system configuration screen (Manage Jenkins – Configure System).

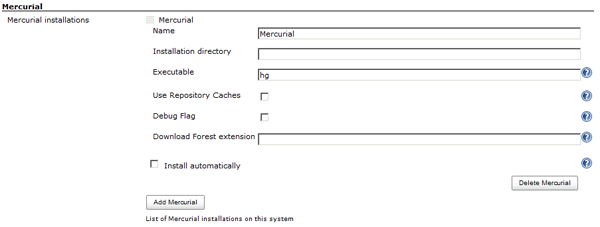

Mercurial

The Mercurial configuration is straightforward and offers a reasonable set of defaults, so of course I changed it. I added the path for the Mercurial binaries to my PATH environment variable to make command-line access easier outside of the build server, and then modified the Mercurial settings in the build server to reflect that change.

My simplified configuration is just the name of the executable, with the rest of the values left blank.

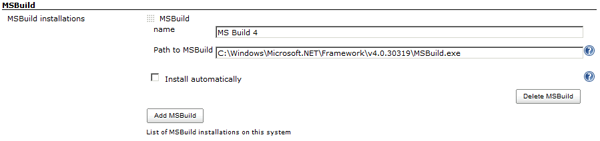

MS Build

The latest MS Build executable is installed as part of the .Net framework installation. In the Jenkins server setup, I add an MS Build item, naming it with its version number (I can add separate, named configurations for each version later if I’m so inclined) and pointing the path to “C:\Windows\Microsoft.NET\Framework\v4.0.30319\MSBuild.exe”.

Note: You can define multiple MS Build executables if you have projects that run on different versions. Naming them clearly will help when you later need to select the appropriate MS Build exe to build with.

As I pointed out in the first post, I decided I would use Twitter for status notifications, as Twitter is more widely accessible and won’t clog up my inbox (the downside being limited status information). There is important additional information on the plugin page for setting it up.

Setting up the CI Job

With the server configured, I can move on to set up the initial CI build job. Initially, this job will be responsible for picking up changes from Mercurial, executing the build, and reporting the results.

- Select “New Job” from top left menu

- Select “Build a free-style software project”

- Enter a Name

- Enter Details

- Select Mercurial for SCM and enter URL for the repository (I am using bitbucket for this example) as well as selecting repository browser (bitbucket)

- Initially I’ll leave build triggers not defined

- Configure MS Build by specifying the *.sln file

- Check the “twitter” checkbox at bottom

- Run build by clicking “Build Now” at top left

- Start debugging build problems

Note: Though I didn’t show it here, there is also an advanced option under the Mercurial settings called “Clean Build”. This will clean the workspace before each build so binaries and test results won’t pollute later builds.

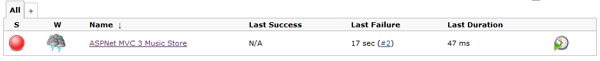

Dashboard View of Failed Build

My first attempted build fails. The details are available by opening the build and clicking the Console Log link in the left menu (which changes to reflect the context of the screen we are on). The console log displays the raw output of the commands executed during the build.

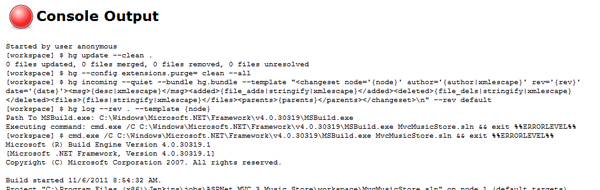

Looking at the Console Log for a Failed Build

Here are the errors I had to work through in order to get the initial build to work. Some of them came down to me missing feedback from the system or configuring something incorrectly.

- Error (twice), console log told me hg wasn’t recognized

  - hg hadn’t actually installed the first time due to Windows updates being in the middle of another install

  - I rebooted to finish Windows update, installed TortoiseHg, rebooted to have a clean startup (and paths), and the issue was corrected

- Failure – In the console log it complained about not being able to find the MS Build executable

  - Returned to project settings and switched MS Build option from (default) to the one I had configured above in global settings

- Error MSB4019: The imported project “C: … Microsoft.WebApplication.targets” was not found

  - Options:

    - Install VS 2010 Shell (http://www.microsoft.com/download/en/details.aspx?id=115)

    - Install Visual Studio

    - Copy folder from existing install (C:\Program Files\MSBuild\Microsoft\VisualStudio\v10.0\WebApplications)

  - I went with option 1 and ran Windows updates again before continuing

- error CS0234: The type or namespace name ‘Mvc’ does not exist

  - Would have been fixed if I had installed VS (oh well)

  - Download and install MVC: http://www.microsoft.com/download/en/details.aspx?displaylang=en&id=4211

At this point the initial build runs successfully.
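If you end up repeating this kind of debugging on more than one build server, the error-to-fix mapping can be captured in a small script that scans a saved console log. This is only a sketch: the MSB4019 and CS0234 codes come from the errors I hit, while the exact wording of the "hg not recognized" message, the fix descriptions, and the names KNOWN_ERRORS and diagnose are my own assumptions for illustration.

```python
import re

# (pattern, suggested fix) pairs. The hg pattern assumes the usual Windows
# "is not recognized" wording; adjust it to match your own console log.
KNOWN_ERRORS = [
    (re.compile(r"hg'? is not recognized"),
     "Install Mercurial/TortoiseHg and reboot so the PATH is refreshed"),
    (re.compile(r"MSB4019"),
     "Install the VS 2010 Shell (or Visual Studio) to get the web targets"),
    (re.compile(r"CS0234.*Mvc"),
     "Install ASP.NET MVC on the build server"),
]


def diagnose(console_log):
    """Return the suggested fix for every known error found in the log text."""
    fixes = []
    for pattern, fix in KNOWN_ERRORS:
        if pattern.search(console_log):
            fixes.append(fix)
    return fixes
```

Calling diagnose() with the text copied from the Console Log screen returns the list of fixes worth trying, in the order the patterns are defined; an empty list means none of the known signatures were found.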

Refining the Setup

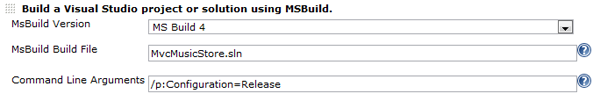

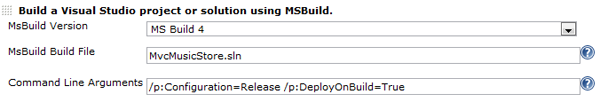

After getting the initial build set up, it’s time to add some refinements. First up is switching the build to run in release mode by adding "/p:Configuration=Release" to the command line arguments in the MS Build section.

Jenkins Configuration – Adding Release to Build Args

Now that I have it working, I also want to add the option to automatically run when new changes are committed to source control. The Build Triggers section of the job configuration controls how jobs are triggered, so I’ll select the “Poll SCM” option to poll my source control repository. A value of "*/5 * * * *" will set it to check every 5 minutes (which may be overkill given how few updates I will be making over the course of this project, but oh well).

Jenkins Configuration – Defining Polling for Build Trigger

Note: Timing uses Unix cron-style values. Basically the string is used as a test against the current time to see if a particular step is to be run, so 5 * * * * would run only if the minutes value was a 5, while */5 * * * * runs if it is divisible by 5.
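To make the difference between 5 and */5 concrete, here is a minimal sketch of how a single minutes field could be tested against the current minute. It handles only the three forms discussed here (a plain number, * and */N) and is not how Jenkins itself implements polling; the function name minute_field_matches is hypothetical.

```python
def minute_field_matches(field, minute):
    """Test a cron minutes field ('*', 'N' or '*/N') against a minute 0-59."""
    if field == "*":
        # A bare star matches every minute.
        return True
    if field.startswith("*/"):
        # '*/N' matches whenever the minute is divisible by N.
        step = int(field[2:])
        return minute % step == 0
    # A plain number matches only that exact minute.
    return minute == int(field)


# Minutes of the hour on which each schedule would fire.
every_five = [m for m in range(60) if minute_field_matches("*/5", m)]
only_five = [m for m in range(60) if minute_field_matches("5", m)]
```

Here every_five contains twelve values (0, 5, 10, and so on up to 55), while only_five contains the single value 5, which is why "5 * * * *" polls once an hour and "*/5 * * * *" polls twelve times an hour.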

Capturing the Results

The last step of the build stage is to capture the resulting binaries and website pages so they can be deployed consistently to other environments. The addition of WebDeploy to Visual Studio and IIS has made web deployment easy* to manage, which will simplify getting an archive of the results and my deployment scripts later.

Note: ***Web Deploy has made this really easy IN THEORY. This is the topic of a later post in the series and is also the reason screenshots and check-ins for the early stages may reflect dates in early November and these posts are being written in December.

By default, when I create a deployment package I will get a folder of all the files (cshtml, dll, and so on) I need to run the site. In the project properties for the website, there is a build option to zip these files as a package after building it, which will simplify archival even further.

Project properties, select the tab for “Package/Publish Web” and check the “Create deployment package as zip” option

The last piece is to tell MSBuild I want to build the web deployment package. In the MSBuild step of the CI job, I add the command-line flag of "/p:DeployOnBuild=True", which will be passed on to the individual projects in the solution to act on if they understand it (which the web project will and the unit test project will not, handy).
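For reference, the command line Jenkins ends up running is roughly the MSBuild executable, the solution file, and the two properties added so far. The sketch below assembles that argument list; the solution name MvcMusicStore.sln is an assumption on my part, and msbuild_command is a hypothetical helper, not something Jenkins or MSBuild provides.

```python
def msbuild_command(msbuild_exe, solution, configuration="Release",
                    deploy_on_build=True):
    """Build the argument list for an MSBuild run with the flags used here."""
    args = [msbuild_exe, solution, "/p:Configuration=" + configuration]
    if deploy_on_build:
        # Projects that understand this property create a deployment
        # package; other projects in the solution ignore it.
        args.append("/p:DeployOnBuild=True")
    return args


command = msbuild_command(
    r"C:\Windows\Microsoft.NET\Framework\v4.0.30319\MSBuild.exe",
    "MvcMusicStore.sln",  # assumed solution file name
)
```

The resulting list can be passed to subprocess.run on a machine with MSBuild installed, which is a handy way to reproduce a build server failure from a plain command prompt.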

Looking at the Console Log for a Failed Build

At this point running another build fails, with multiple errors complaining about Package steps (like CheckAndCleanMSDeployPackageIfNeeded) failing. The solution is to install the WebDeploy 2.0 refresh package on the server, located here. Once this is installed, the build is able to complete successfully.

Now that I have a nice, tidy package of the deployable build, I need to put it somewhere for longer term use. In the Post-Build Actions of my job configuration, there is an option to archive artifacts from the build. Checking this box and entering the path for the zip file from the Project Properties screen (obj\Debug\Package\MvcMusicStore.zip) tells Jenkins to archive that zip file as the artifacts from each build.

After executing another successful build, we can see the build server has archived the zip file (above). If I click that zip file I’ll be prompted to download it to a local machine.
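Before importing a downloaded package anywhere, it can be worth a quick sanity check that the archive is intact and contains what you expect. A minimal sketch using Python's standard zipfile module follows; list_package is a hypothetical helper name and the path you pass in is wherever you saved the download.

```python
import zipfile


def list_package(zip_path):
    """Verify the archive's checksums and return the names of its entries."""
    with zipfile.ZipFile(zip_path) as package:
        # testzip() returns the name of the first corrupt entry, or None.
        bad_entry = package.testzip()
        if bad_entry is not None:
            raise ValueError("corrupt entry in package: " + bad_entry)
        return package.namelist()
```

Running list_package on the downloaded MvcMusicStore.zip and scanning the names for the expected views, content files, and assemblies gives a quick signal that the package step produced something sensible.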

Test the Package

We’re not done until we test the package. Luckily, testing a WebDeploy package is pretty easy: all we have to do is open the IIS configuration screen, select the default web site, and then use the import button on the right side of the interface to import the zip file. This imports all of the files, sets up the application, and gives me a running website. There is more information on WebDeploy in this post by ScottGu and we will get more in depth with it in later steps.

Note: It’s interesting to note that this is where I found the first bug in MVC Music Store. The images in the CSS file were defined assuming the application was at the root level (fix), as were the navigation paths in the header (fix).

Next Steps

We now have a working Continuous Integration stage that will detect checked-in changes, build them, and create a deploy package. The next step is to execute the unit tests and capture their results. Before we can capture results, though, we need to have unit tests, and to have unit tests we have to make the Music Store tutorial code testable. The next post will cover that conversion. It’s interesting to note, especially if you are one of those people who believe unit tests to be wasteful, that the very first controller I put under test in this very public, very widely deployed, open source project contains a defect.

My roles have included accidental DBA, lone developer, systems architect, team lead, VP of Engineering, and general troublemaker. On the technical front I work in web development, distributed systems, test automation, and devop-sy areas like delivery pipelines and integration of all the auditable things.
